Guidelines for censoring online content could be silencing marginalized voices

In March of 2017, several big-brand companies, including Coca-Cola, Pepsi, WalMart and Dish Network, moved to suspend advertising on YouTube after expressing outrage over finding their advertisements running before videos with racist, sexist and otherwise inappropriate and controversial content.

On Jan. 16, YouTube announced that “human reviewers would watch every second of video in … Google Preferred,” its list of top content that advertisers pay premiums to get their ads onto, as reported by Jack Nicas of The Wall Street Journal.

While the review will certainly be effective in removing sponsorship from channels that should not be highlighted, it does not come without risk, as it leaves one big question unanswered: what counts as inappropriate?

Google already employs a policing algorithm to sort through video content, but it is far from flawless.

Recently the company came under fire for pulling advertising from a channel named “Real Women, Real Stories,” which uses its platform to tell the stories of women who have been sexually harassed or abused.

The channel’s creator, Matan Uziel, was quoted by The Wall Street Journal as saying that the decision to pull advertising cut off the project’s funding.

YouTube’s official “Advertiser Friendly Content Guidelines” state that any content featuring or focusing on sensitive topics or events including, but not limited to, war, political conflicts, terrorism or extremism, death and tragedies, and sexual abuse, even if graphic imagery is not shown, is generally not suitable for ads.

Taking these topics off the table for wider discussion is contrary to the unspoken role of the internet as a place for free expression and as a superhighway of information.

Cutting off funding for groups promoting important, unrecognized issues seems like a tragic casualty in the name of security.

However, leaving room for people such as Logan Paul to make money off sickening jokes or images (in Paul’s case, footage of a suicide in Japan) seems equally wrong.

What is the greater risk: over-censoring or under-policing? Of course, I think that no one should be rewarded in any way for promoting hate speech and otherwise damaging content.

But I also don’t think that everything important is necessarily a pleasant subject.

Google’s company motto is “Don’t be evil.”

So, is it evil to silence voices that ought to be heard? How about to give a platform to voices that need to be silenced? How do we determine which ones add depth to our society versus which ones stifle it?

These are weighty questions, and they carry a tremendous amount of significance. Answering them demands a high standard, but I think it is one that we as consumers should expect Google to meet.

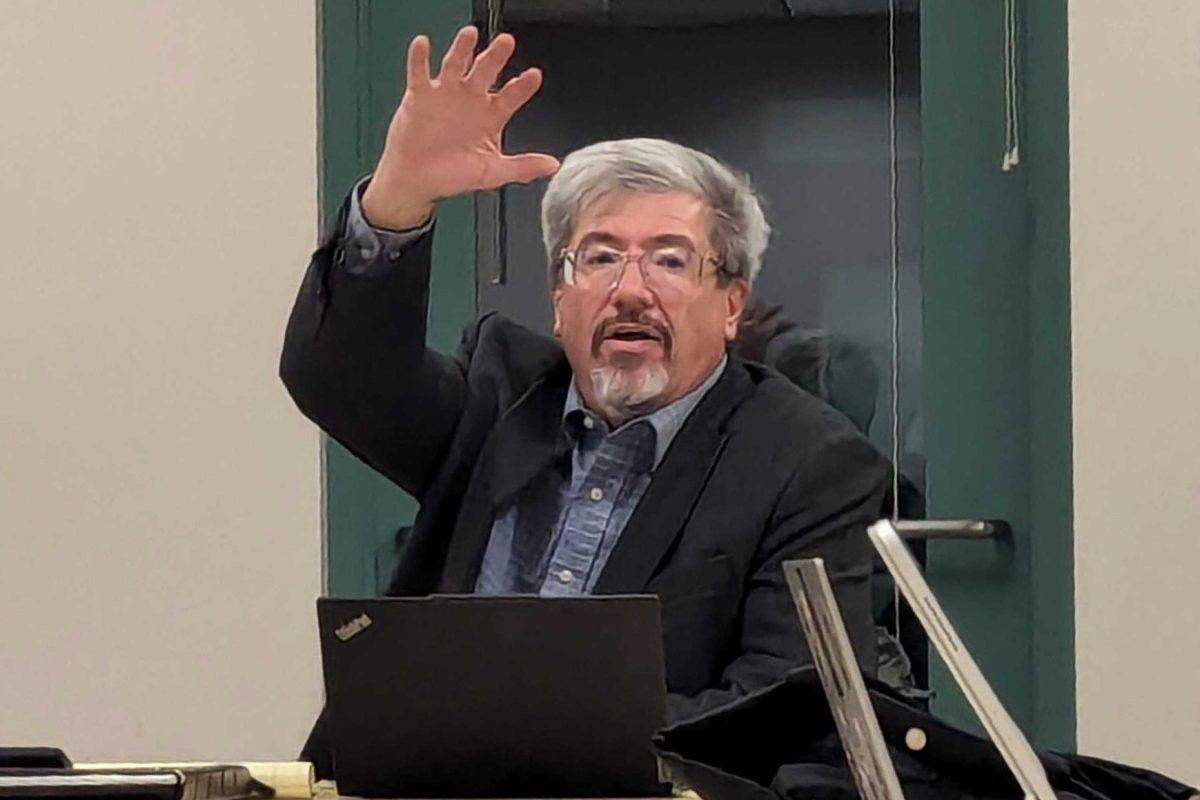

The gravity of the situation is best summarized by Sarah T. Roberts, an information studies professor at UCLA, who said that she’s “not sure [Google] fully apprehend[s] the extent to which this is a social issue and not just a technical one.”

Simply overcorrecting in the face of backlash won’t solve the issue at the heart of this controversy. It requires a set standard on what we as a society are willing to tolerate, and that isn’t something you can ask a machine.

YouTube has publicly stated that users upload 65 years’ worth of content a day onto the site, so human review of every video is completely out of the question.

However, in a world of self-driving cars, reusable space shuttles and drones beginning to pass for humans, it would seem that a feasible solution would not be too far out of reach.

Google ought to employ this new strategy not just through the eyes of an attorney looking to avoid liability, but rather through the eyes of everyday people looking for responsibility.

Discussing controversy is separate from creating it, and such distinctions should be honored in allowing our society to be diverse and dynamic.

Sexual harassment, racism and other societal diseases should be eradicated from our culture. When they do make appearances, it is imperative that we acknowledge the existence of the problem so that it can be dealt with.

The internet is the most efficient platform for doing just that, with its accessibility and variety. It should continue to be valued as such.

Though it is a private company, and no constitutional free speech clauses apply, Google should still hold this ethic and turn YouTube into a site where people can be safe, but continue to be free.

Let’s take the trade-off out of the equation, and instead use every available resource to find a solution that delivers both safety and freedom.